Hi, I am evaluating your charting component. I tried the Digital Analyzer Performance demo with rendering set to software, and it looks like it is missing 99.9% of the points even though resampling is set to off. What am I doing wrong?

- Ime Parsaa asked 3 years ago

Hi there,

I’ve re-read your question a couple of times & did a little testing this afternoon. I think I understand what you mean now!

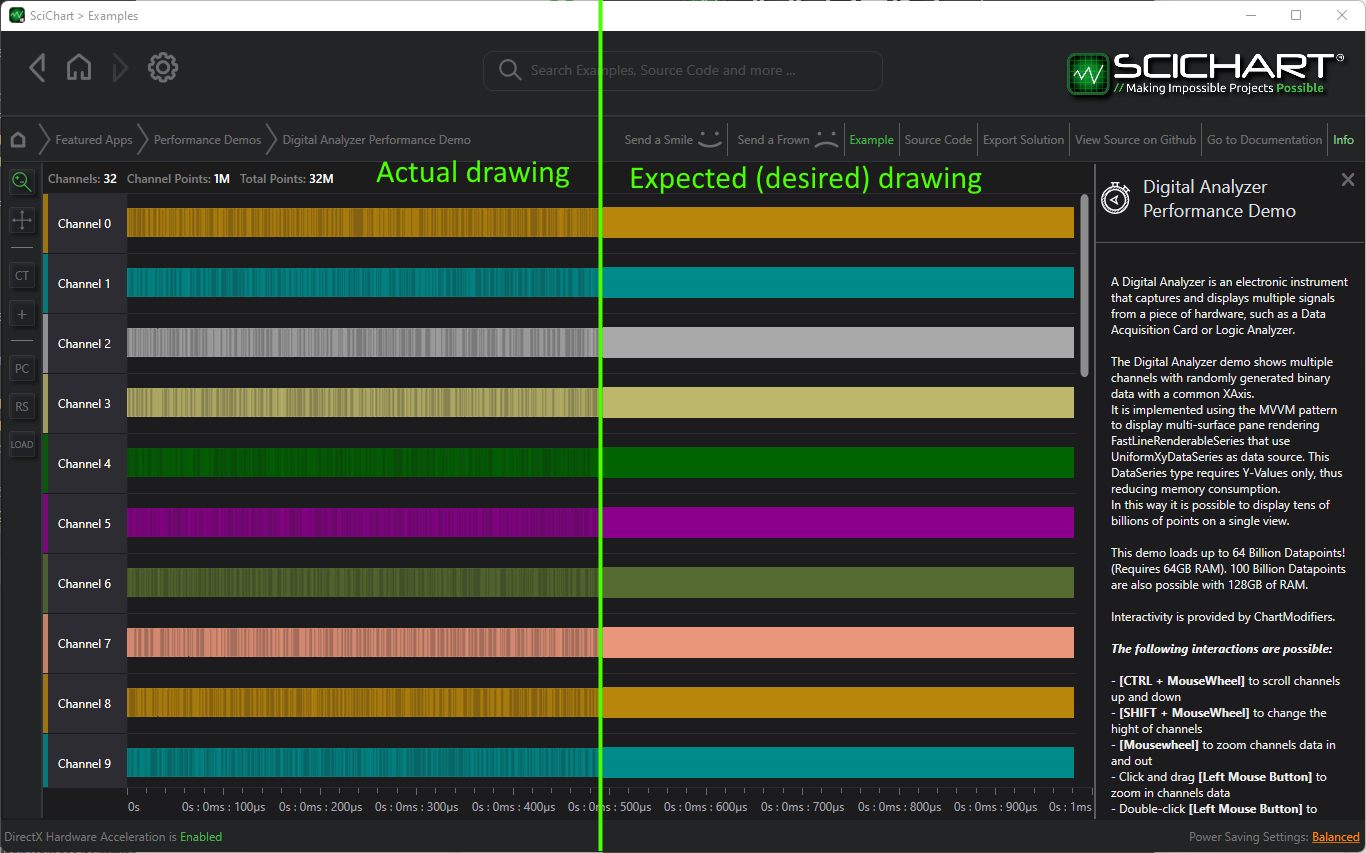

What you mean is – your expected vs. actual drawing is like this.

Is this correct? (Can you confirm what you are expecting when fully zoomed out?)

If so, I understand the reason for this rendering and have an easy workaround for you.

The reason is an artefact in our drawing engine. We have four renderer plugins, and one of the software renderers uses integer fixed-point coordinates and a fast alpha blending algorithm. For this reason we recommend the Visual Xccelerator renderer plugin, which is the most accurate renderer plugin and has the highest performance.

Read about the different Renderer Plugins in SciChart

All the data is there & the waveform is faithfully reproduced. However to get the desired effect you can try these workarounds.

- You can either set FastLineRenderableSeries.StrokeThickness = 2. You could also change StrokeThickness dynamically based on zoom level.

- Or you can set FastLineRenderableSeries.ResamplingPrecision = 1 or 2.

What does this second parameter do? It adjusts the aggressiveness of performance optimisations when the chart is drawn (see documentation). The default value is 0, which will result in a faithful (accurate) representation of the waveform. For extremely dense plots you can get higher quality with a value like 1 or 2.
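As an illustration of why a dense 0/1 waveform is expected to look like a solid band when fully zoomed out, here is a minimal sketch of generic min/max decimation. This is not SciChart's resampling algorithm, which is not described in this thread; `minmax_decimate` is a hypothetical helper, and the 1,000,000 points and 800 pixel columns are illustrative values.

```python
def minmax_decimate(values, columns):
    """Reduce `values` to one (min, max) pair per pixel column.

    Generic min/max decimation for illustration only; it is not
    SciChart's internal resampling.
    """
    n = len(values)
    envelope = []
    for c in range(columns):
        # Map pixel column c to its slice of the data (at least one point).
        start = c * n // columns
        end = max(start + 1, (c + 1) * n // columns)
        bucket = values[start:end]
        envelope.append((min(bucket), max(bucket)))
    return envelope


# A square wave that toggles between 0 and 1 every 7 samples.
signal = [(i // 7) % 2 for i in range(1_000_000)]
envelope = minmax_decimate(signal, 800)

# Every column holds about 1,250 samples, so each one contains both levels.
full_height = sum(1 for lo, hi in envelope if (lo, hi) == (0, 1))
print(f"{full_height} of {len(envelope)} columns span the full 0..1 range")
```

When every column spans both levels, drawing one vertical line per column from its minimum to its maximum fills the whole band, so no visible information is lost even though only 800 columns are drawn.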

I have tested this: with FastLineRenderableSeries.ResamplingPrecision = 2 and FastLineRenderableSeries.StrokeThickness = 1 you get a perfect result (as expected), and the chart is still performant with 32 billion or 64 billion datapoints.
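The first workaround, changing the stroke thickness with zoom level, could be sketched roughly as follows. This is plain Python showing only the decision logic, not SciChart code: `stroke_thickness_for_zoom` and its `dense_threshold` parameter are hypothetical names, and the rule "use 2 when more than one point shares a pixel column" is an assumption chosen for illustration.

```python
def stroke_thickness_for_zoom(visible_points, viewport_width_px, dense_threshold=1.0):
    """Pick a stroke thickness from the point density in the viewport.

    Hypothetical rule: return 2 when more than `dense_threshold` points
    share a pixel column (zoomed out), otherwise 1 (zoomed in).
    """
    if viewport_width_px <= 0:
        raise ValueError("viewport_width_px must be positive")
    points_per_pixel = visible_points / viewport_width_px
    return 2 if points_per_pixel > dense_threshold else 1


print(stroke_thickness_for_zoom(1_000_000, 800))  # zoomed out: prints 2
print(stroke_thickness_for_zoom(500, 800))        # zoomed in: prints 1
```

In an application the returned value would be assigned to FastLineRenderableSeries.StrokeThickness whenever the visible range changes; how that change is detected depends on your own code.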

Here is a video showing the difference on a 4K Screen.

Let me know if this helps!

Best regards,

Andrew

- Andrew Burnett-Thompson answered 3 years ago

- last edited 3 years ago

Hi there,

Thank you for getting in touch!

> cleary its graphical problem when it gives wrong directional lines and leave cap between lines,

OK, this doesn’t sound right. Our chart library is designed to render the correct visual no matter what data you give it.

SciChart has some intelligent internal algorithms that balance performance with accuracy, and in our own internal testing there should be no discernible difference between the visual you get and the dataset you put in, so long as you’ve used the correct settings.

However, it is entirely possible you’ve stumbled across a defect.

Do you want to schedule a conference call with our tech team? That way you could show us the problems and we can give you advice.

Alternatively, if you have a waveform which does not render correctly, upload a solution to reproduce the problem and our engineers will investigate & reply back asap.

> Visuals only show less than 800 and it was 0/1 data so over 1k datapoints should contain every pixel and that much data points it should shown only “rectangle” to end even with software rendered

I’m sorry but I don’t understand this part and it doesn’t make much sense. Can you clarify?

> Right showing visuals is most important thing in my application with performance. with out resampling even its best whats resampling whats is in market but thats still resampled and can contain errors in wrong points, like it show in that image what i sended, “it looks” “ok” but not OK.

Again, let’s schedule a call or please send code to reproduce the problem you’re seeing and our engineers will take a look

Best regards,

Andrew

- Andrew Burnett-Thompson answered 3 years ago

- last edited 3 years ago

I just used your own Examples Explorer, opened the Digital Analyzer Performance demo and set software rendering. It showed what is in those pictures. I haven’t edited any data, I only changed the graphics settings to software, so there’s no point in sending code =).

I am on summer holidays for the next 3 weeks; we can schedule a call after my holidays.

- Ime Parsaa answered 3 years ago

Yes, the second one looks correct. I would like to use StrokeThickness 1 and no resampling at any point. If you resample the data, then yes, it is probably in the chart but not on screen, and there can be errors at that point, as the software-rendered image showed.

- Ime Parsaa answered 3 years ago

I’d advise you to use the setting I suggested: FastLineRenderableSeries.ResamplingPrecision. This will achieve the desired result and still be able to draw 32/64 billion points. That’s a world record, by the way! No one else in the world can achieve SciChart’s WPF chart visualisation performance. At SciChart we make a high-quality, precise piece of software. Our customers rate us very highly (4.85/5 stars) and our software has won prestigious awards. If you or anyone else finds an issue or error in our software you can contact us at any time. Our friendly, helpful team will be glad to resolve it. Finally, we do have consultancy services available, and some of our customers with the biggest performance demands, such as Formula One or medical, use our consultants to fine-tune SciChart for their apps. Hope that helps. Have a good day Pasi!

Yes, and that is a lie if you say you draw 32 billion points on screen in that time. In that example you are using resampled data, and those points are not drawn on screen because most of the lines are scrolled out of the viewport area. So nothing is drawn on screen. Can you show me an example where you draw all those different series in a single viewport area?

There’s no lie here. It’s pretty easy to resize the channels: try SHIFT+MOUSEWHEEL until they are all in view. Now select point count = 1 Billion per series, and click LOAD. You can view all the channels on screen, all the data is shown, and you can zoom all the way in and all the way out. I recorded a video here to demonstrate this and how the Resampling Precision property affects visual output: https://www.youtube.com/watch?v=DinJx8BgMXE. If you think what we’ve done is impossible, well, that’s what we do. Our team is made of highly skilled engineers and we make impossible data visualisation projects possible. Thanks!

Yep, I noticed you do impossible things, things you should NEVER do when you visualise data… Zooming out in that digital performance demo drops the whole data set out of the visible range, and there were multiple other problems too, so we decided to go with another product. No thanks for this product. If you don’t know how to do data visualisation correctly, I think you should not do it at all.

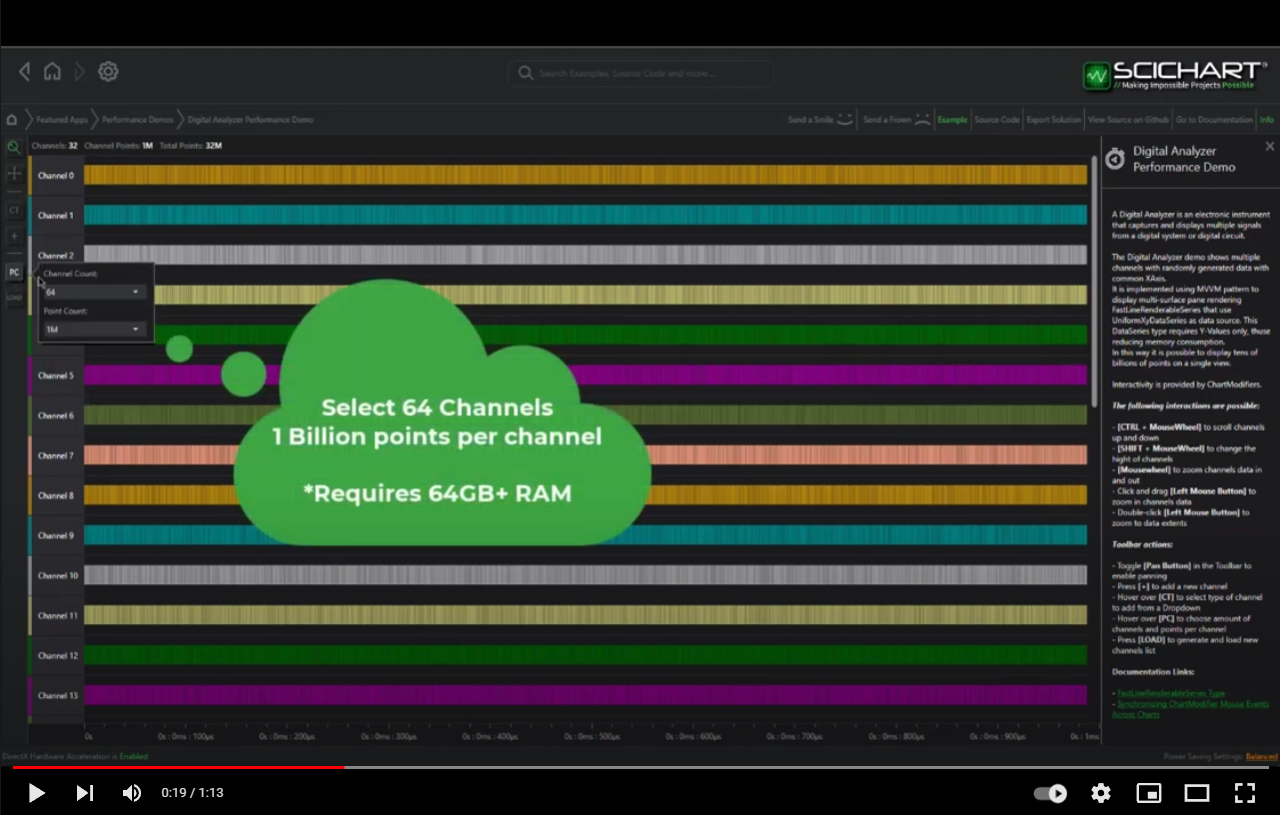

On the left there are controls to set the number of channels and the number of points.

Try selecting 32 Channels & 100M points per channel. This will generate and display 100M points per channel (3.2 billion in total).

Try it again with 32 or 64 Channels and 1 Billion points per channel and this will generate and display 32 or 64 Billion points (requires 32GB – 64GB RAM for these tests).
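The point counts above are simply channels multiplied by points per channel. A small sketch of that arithmetic follows; `total_points` is a hypothetical helper, and the bytes-per-point figure is only an inference from the RAM numbers quoted above, assuming the quoted RAM is dominated by point storage and 1GB is taken as 10^9 bytes.

```python
def total_points(channels, points_per_channel):
    """Total number of datapoints across all channels."""
    return channels * points_per_channel


configs = [
    (32, 100_000_000),     # 32 channels x 100M points
    (32, 1_000_000_000),   # 32 channels x 1 Billion points
    (64, 1_000_000_000),   # 64 channels x 1 Billion points
]

for channels, per_channel in configs:
    total = total_points(channels, per_channel)
    print(f"{channels} channels x {per_channel:,} points = {total:,} points")

# Assumption: 32GB of RAM for 32 billion points implies roughly
# one byte per point if storage dominates memory use.
implied_bytes_per_point = 32e9 / total_points(32, 1_000_000_000)
print(f"implied storage: about {implied_bytes_per_point:.0f} byte per point")
```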

Here’s a video showing how to use the controls.

I suggest you leave the defaults in the Examples suite: switching the hardware-accelerated rendering to software or setting resampling to off will degrade performance.

Once you have created the big dataset, try the controls on the left to zoom and pan the chart. You should find that you can zoom into all the data, and zoom all the way out. Use Mousewheel to zoom in/out and Double-click to reset the zoom. Finally, you can scroll up & down in the listview to see all the channels, or use SHIFT+MOUSEWHEEL to resize channels so all are viewable on screen.

If you want to know more about performance tips & tricks and how to get the best from SciChart WPF, please see this link. Finally if you have any questions about SciChart WPF performance, feel free to ask!

Hope this helps!

Best regards,

Andrew

- Andrew Burnett-Thompson answered 3 years ago

- last edited 3 years ago

So you say it’s a performance problem, when clearly it is a graphical problem: it draws lines in the wrong direction and leaves gaps between lines. That small area contains 1 million points, as your example states, but the visual shows fewer than 800. Since it is 0/1 data, more than 1k datapoints should cover every pixel, so with that many points it should show only a solid “rectangle” from start to end, even with software rendering… That is not a performance problem at that point; is it a wrongly built rendering pipeline, or is the data resampled at the shader stage too? Showing the right visuals is the most important thing in my application, along with performance, and without resampling. Even if yours is the best resampling on the market, it is still resampled and can contain errors at the wrong points, as the image I sent shows: it “looks OK” but is not OK.

- Ime Parsaa answered 3 years ago
